Kernel Panic, No Display, and NVIDIA Drama - A Regular Day in DevOps

I am a Python/DevOps engineer from Nepal, with hands-on experience in Python frameworks, systems programming, and cloud computing, and in automating and optimizing mission-critical AWS deployments using configuration management, CI/CD, and DevOps practices.

Let me tell you about this week (mine, obviously).

I pressed the power button on our office’s Ubuntu server. Fans started spinning. CPU light turned on. Everything sounded normal. But the monitor? Completely black. Nothing. Not even the BIOS screen showed up.

Great. Just great.

First Problem: No Display At All

Okay, so picture this. The machine is ON. I can hear it running. I can even see the CPU running (thanks to the awesome-looking LEDs😁). But the screen shows absolutely nothing. Not a single pixel.

I got scared. I thought the motherboard was dead. It's a Gigabyte board, and well... Gigabyte can be dramatic sometimes.

I tried everything. Different monitor, different cables, different ports. Nothing worked.

Then I thought, let me just leave it alone for a bit. Sometimes electronics just need a break. Like me.

So here's what I did:

Turned it off completely

Unplugged the power cable from the wall

Held the power button for 20 seconds (this drains leftover charge from the board)

Went to the washroom for a nature call

Came back after 30 minutes

Plugged it back in. Pressed power.

Display came on. Just like that.

I know it sounds like magic, but it's a real thing. Motherboards hold residual charge in their capacitors, and that charge can leave the board stuck in a weird state where it refuses to POST (run its Power-On Self-Test). Draining it fully lets everything reset.

Okay cool. Hardware is alive. But...

Second Problem: Kernel Panic

Instead of my normal login screen, I got this:

KERNEL PANIC!

Please reboot your computer.

VFS: Unable to mount root fs on unknown-block(0,0)

For those who haven't seen this before, this is basically the Linux kernel saying "I woke up and I have no idea where the hard drive is. I give up."

That unknown-block(0,0) part means the kernel can't find ANY disk to use as root. Not because the disk is broken, but because it doesn't have the right drivers loaded to see the disk. Those drivers live in the initramfs (a tiny filesystem that loads first), and for this kernel it was either missing or broken.
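A quick way to check this from a kernel that does boot is to confirm that every kernel image in /boot has a matching initramfs next to it. The sketch below assumes Ubuntu's default naming (vmlinuz-<version> paired with initrd.img-<version>), and check_initrds is a hypothetical helper name. It only catches an initramfs that is missing or empty, not one that is subtly broken.

```shell
#!/usr/bin/env bash
# Sketch: list each kernel in a boot directory and flag the ones that have
# no usable initramfs. Assumes Ubuntu's naming, where vmlinuz-<version>
# pairs with initrd.img-<version>. Only catches a MISSING or EMPTY initrd.
#
# Example:
#   check_initrds /boot

check_initrds() {
  local bootdir="${1:-/boot}" status=0 img ver
  for img in "$bootdir"/vmlinuz-*; do
    [ -e "$img" ] || continue            # glob matched nothing
    ver="${img##*/vmlinuz-}"             # strip the path and prefix, keep the version
    if [ -s "$bootdir/initrd.img-$ver" ]; then
      echo "OK      $ver"
    else
      echo "MISSING $ver"                # absent or zero-length initrd
      status=1
    fi
  done
  return "$status"
}
```

A non-zero exit status means at least one installed kernel has no usable initramfs and is likely to panic exactly like this one.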

The Save: Booting an Older Kernel

Here's something beautiful about Ubuntu. It keeps your old kernels in the boot menu. So even when the latest one breaks, you can go back.

I rebooted, held Shift to open the GRUB menu, picked Advanced options, and selected the previous kernel.

It booted perfectly. Everything was fine.

So the disk was fine. Ubuntu was fine. It was just the new kernel that was broken.

Now I needed to find out why.

The Investigation

First thing I checked: which kernels are installed?

dpkg --list | grep linux-image

And I spotted it immediately:

ii linux-image-6.14.0-37-generic ... (working kernel)

iF linux-image-6.17.0-14-generic ... (broken kernel)

See that iF? The i means the package is marked for installation. The F means half-configured: the kernel package was unpacked onto the system, but its configuration step failed. The initramfs was never built properly. That's why the kernel couldn't find the disk.
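On a machine with many kernels, that iF line is easy to miss. A small filter over the same dpkg --list output can print only the kernel-related packages that are not in the healthy ii state. This is a sketch: unhealthy_kernel_pkgs is a made-up name, and it assumes the usual dpkg --list column layout (state first, package name second).

```shell
#!/usr/bin/env bash
# Sketch: read `dpkg --list` output on stdin and print kernel-related packages
# (linux-image-*, linux-headers-*, linux-modules-*) whose state is not "ii".
# Header lines ("Desired=...", "||/ Name ...") never match the state pattern.
#
# Example:
#   dpkg --list | unhealthy_kernel_pkgs

unhealthy_kernel_pkgs() {
  awk '
    # Column 1: desired action, current status, optional error flag R.
    $1 ~ /^[uihrp][ncHUFWti]R?$/ && $1 != "ii" &&
    $2 ~ /^linux-(image|headers|modules)/ { print $1, $2 }
  '
}
```

It also surfaces rc leftovers, which is handy later when it's time to clean up.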

But what caused the failure?

The Root Cause: NVIDIA Said No

I ran this to try and fix things:

sudo apt --fix-broken install

And the error told me everything:

Error! Bad return status for module build on kernel: 6.17.0-14-generic

dkms autoinstall on 6.17.0-14-generic/x86_64 failed for nvidia(10)

There it is. My NVIDIA driver (version 575) could not compile against kernel 6.17. When Ubuntu installs a new kernel, it uses DKMS to rebuild all third-party drivers (like NVIDIA) for that kernel. NVIDIA's build failed. That failure broke the entire kernel installation chain. And that left me with a kernel that was half-installed and couldn't boot.
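Before trusting a new kernel, you can check whether DKMS actually built every module for it. dkms status prints one line per module, version, and kernel, but the exact format differs between DKMS releases (newer ones print module/version, older ones separate everything with commas). The hypothetical helper below assumes that a line ending in installed means the build succeeded, and reports modules that are built for some kernel but not for the one you name. When a build does fail, the apt error output normally points at the matching make.log with the real compiler error.

```shell
#!/usr/bin/env bash
# Sketch: read `dkms status` output on stdin and print every module that is
# "installed" for at least one kernel but NOT for the kernel given as $1.
# Handles both "nvidia/575.x, <kernel>, x86_64: installed" (newer DKMS)
# and "nvidia, 575.x, <kernel>, x86_64: installed" (older DKMS).
#
# Example:
#   dkms status | dkms_missing_for_kernel 6.17.0-14-generic

dkms_missing_for_kernel() {
  local kernel="$1"
  awk -v k="$kernel" '
    $NF != "installed" { next }            # skip added/built/broken entries
    {
      split($1, parts, "[/,]")             # "nvidia/575.x," or "nvidia," -> "nvidia"
      mod = parts[1]
      seen[mod] = 1
      for (i = 2; i <= NF; i++) {
        f = $i
        sub(/,$/, "", f)                   # drop the trailing comma
        if (f == k) ok[mod] = 1
      }
    }
    END { for (m in seen) if (!(m in ok)) print m }
  '
}
```

In a situation like the one above, you would expect it to print nvidia for 6.17.0-14-generic and nothing for the working 6.14 kernel.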

This is actually super common. Ubuntu's HWE (Hardware Enablement) kernels move fast. NVIDIA drivers don't always keep up. The new kernel lands, NVIDIA can't build against it, and boom, broken boot.

The Fix

Since this is a server, I don't need the bleeding-edge kernel. Stability matters more. So the plan was simple: remove the broken 6.17 kernel and stick with 6.14, which works perfectly.

But there was a small headache. Dependency chain. Package A depends on Package B which depends on Package C. You can't just remove one. You have to go in order.

The trick is to remove the meta-packages first, then the actual kernel:

# This one goes first: it breaks the dependency chain

sudo dpkg --force-remove-reinstreq --purge linux-generic-hwe-24.04

# Now these will work

sudo dpkg --force-remove-reinstreq --purge linux-headers-generic-hwe-24.04

sudo dpkg --force-remove-reinstreq --purge linux-image-generic-hwe-24.04

sudo dpkg --force-remove-reinstreq --purge linux-headers-6.17.0-14-generic

sudo dpkg --force-remove-reinstreq --purge linux-image-6.17.0-14-generic
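Because the order matters, it can help to keep the list in one place and stop at the first failure instead of running the commands one by one. purge_in_order below is a hypothetical wrapper around the same dpkg command used above. By default it only prints what it would run, so you can review the order first; set DRY_RUN=0 to actually execute.

```shell
#!/usr/bin/env bash
# Sketch: purge packages strictly in the order given (meta-packages first).
# DRY_RUN=1 (the default) only prints the commands; DRY_RUN=0 runs them and
# stops at the first failure so you can read the dependency error.
#
# Example:
#   purge_in_order linux-generic-hwe-24.04 linux-headers-generic-hwe-24.04 \
#     linux-image-generic-hwe-24.04 linux-headers-6.17.0-14-generic \
#     linux-image-6.17.0-14-generic

purge_in_order() {
  local pkg
  for pkg in "$@"; do
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "sudo dpkg --force-remove-reinstreq --purge $pkg"
    elif ! sudo dpkg --force-remove-reinstreq --purge "$pkg"; then
      echo "purge failed at: $pkg" >&2
      return 1
    fi
  done
}
```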

Then clean up:

# Fix any leftover broken state

sudo apt --fix-broken install

# Purge leftover config files of removed packages (state "rc"), such as old kernels

dpkg --list | awk '/^rc/ {print $2}' | xargs -r sudo dpkg --purge

# Update the boot menu

sudo update-grub

And one more important step: stop Ubuntu from pulling in kernel 6.17 again on the next update:

sudo apt-mark hold linux-image-generic-hwe-24.04 linux-headers-generic-hwe-24.04 linux-generic-hwe-24.04
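apt-mark showhold prints the packages currently on hold, one per line. A small check like the hypothetical assert_held function below (it reads that output on stdin) can confirm that all three meta-packages are really held before you walk away from the server.

```shell
#!/usr/bin/env bash
# Sketch: read `apt-mark showhold` output on stdin and check that every
# package passed as an argument is on hold. Prints the ones that are not.
#
# Example:
#   apt-mark showhold | assert_held linux-image-generic-hwe-24.04 \
#     linux-headers-generic-hwe-24.04 linux-generic-hwe-24.04

assert_held() {
  local held pkg missing=0
  held="$(cat)"
  for pkg in "$@"; do
    if ! grep -qxF -- "$pkg" <<<"$held"; then   # exact, whole-line match
      echo "NOT HELD: $pkg"
      missing=1
    fi
  done
  return "$missing"
}
```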

Reboot. Clean boot. Everything works. Server is back.

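Later, when NVIDIA releases a driver that supports kernel 6.17, I can unhold the packages and reinstall the HWE meta-package. A plain apt upgrade won't bring it back, because apt upgrade only upgrades packages that are still installed, and the meta-packages were purged above:

sudo apt-mark unhold linux-image-generic-hwe-24.04 linux-headers-generic-hwe-24.04 linux-generic-hwe-24.04

sudo apt update

sudo apt install linux-generic-hwe-24.04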

Things I Want You to Remember

Always keep at least two kernels. Ubuntu does this by default. Don't mess with it. That old kernel is your backup plan when things go wrong.
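If you want to verify that the backup plan actually exists, you can count the versioned kernel images that are fully installed. check_kernel_fallback is a hypothetical helper that reads dpkg --list output on stdin; the threshold of two simply mirrors the advice above.

```shell
#!/usr/bin/env bash
# Sketch: count fully installed ("ii") versioned kernel images in
# `dpkg --list` output read from stdin, and warn when fewer than two remain.
# Meta-packages such as linux-image-generic-hwe-24.04 are skipped on purpose:
# only names that continue with a version number are counted.
#
# Example:
#   dpkg --list | check_kernel_fallback

check_kernel_fallback() {
  local count
  count="$(awk '$1 == "ii" && $2 ~ /^linux-image-[0-9]/ { n++ } END { print n + 0 }')"
  if [ "$count" -lt 2 ]; then
    echo "WARNING: only $count installed kernel image(s), no fallback in GRUB" >&2
    return 1
  fi
  echo "$count kernel images installed"
}
```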

Learn the GRUB menu. Hold Shift during boot (on UEFI systems, tap Esc instead). It lets you pick which kernel to boot. This one trick has saved me more times than I can count.
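If the Shift/Esc timing keeps slipping past you, GRUB can be told to always show the menu. GRUB_TIMEOUT_STYLE and GRUB_TIMEOUT are standard settings in /etc/default/grub; show_grub_menu is a hypothetical helper that sets them (menu, 5 seconds, which is only a suggestion) and takes the file path as an argument, so you can try it on a copy first. Against the real file it needs root, and the change only takes effect after sudo update-grub.

```shell
#!/usr/bin/env bash
# Sketch: make GRUB always show its menu for a few seconds.
# Edits the given file (default /etc/default/grub, which needs root).
# Existing keys are rewritten in place; missing keys are appended.
#
# Example (as root):
#   show_grub_menu /etc/default/grub && update-grub

show_grub_menu() {
  local cfg="${1:-/etc/default/grub}" kv key
  for kv in "GRUB_TIMEOUT_STYLE=menu" "GRUB_TIMEOUT=5"; do
    key="${kv%%=*}"                       # e.g. GRUB_TIMEOUT
    if grep -q "^${key}=" "$cfg"; then
      sed -i "s/^${key}=.*/${kv}/" "$cfg"
    else
      printf '%s\n' "$kv" >> "$cfg"
    fi
  done
}
```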

NVIDIA and new kernels don't always get along. On a server, ask yourself: do I really need the latest HWE kernel? If not, stick with what works.

Know your dpkg flags.

ii = Installed and configured (all good)

iF = Marked for install but only half-configured: the configure step FAILED (this is your problem)

rc = Removed but config files still there (needs cleanup)
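Those two letters are really two separate fields: the first is what you asked dpkg to do (the desired action), the second is where the package actually is (its current status). The mapping below follows the legend dpkg prints at the top of dpkg --list; explain_dpkg_state itself is just a hypothetical illustration.

```shell
#!/usr/bin/env bash
# Sketch: translate a dpkg --list state such as "ii", "iF" or "rc" into words,
# following the legend in the dpkg --list header. An optional third letter "R"
# means the package is flagged as needing reinstallation.
#
# Example:
#   explain_dpkg_state iF   # wanted: install, actual: half-configured

explain_dpkg_state() {
  local s="$1" want have extra=""
  case "${s:0:1}" in
    u) want="unknown" ;;  i) want="install" ;;  h) want="hold" ;;
    r) want="remove" ;;   p) want="purge" ;;    *) want="?" ;;
  esac
  case "${s:1:1}" in
    n) have="not installed" ;;     c) have="config files only" ;;
    H) have="half-installed" ;;    U) have="unpacked" ;;
    F) have="half-configured" ;;   W) have="triggers awaited" ;;
    t) have="triggers pending" ;;  i) have="installed" ;;
    *) have="?" ;;
  esac
  if [ "${s:2:1}" = "R" ]; then
    extra=", reinstall required"
  fi
  echo "wanted: $want, actual: $have$extra"
}
```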

unknown-block(0,0) usually does not mean a dead disk. It means the kernel doesn't have the drivers to see your disk, which points to a missing or broken initramfs. Boot an older kernel and fix it from there.

The power drain trick works. No display at all? Unplug everything, hold the power button for 20 seconds, wait a while. It's not broscience. Capacitors really do hold charge that can cause weird behavior.

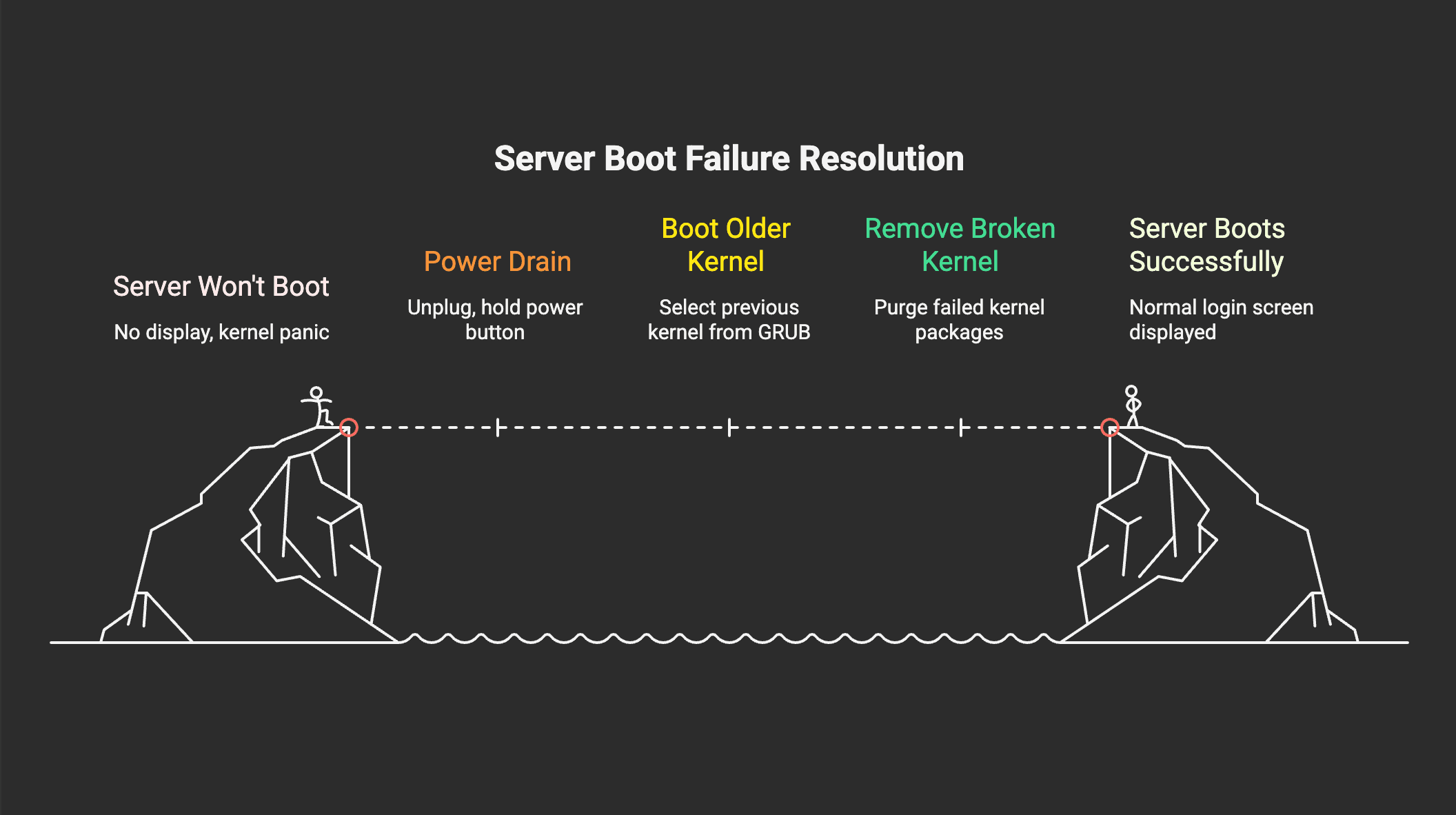

Quick Summary

Server showed no display at all, fixed by draining power and waiting. Then a kernel panic, caused by the NVIDIA driver failing to build against kernel 6.17. Fixed by booting the older kernel from GRUB, removing the broken kernel packages, and holding HWE updates until NVIDIA catches up.

Day well spent? Debatable. But hey, at least the server is running and I got a blog post out of it.

Tyastai haina ra? (Nepali for "Isn't that just how it goes?"😁) You always learn the most when things break.

If you hit something similar, I hope this saves you a few hours. And no, reinstalling Ubuntu is not the answer. I've done that plenty of times in the past, but not here. Here, I debug.